Underwater soundscape data analysis will soon be more efficient. Dr. James Locascio of Mote Marine Laboratory was awarded funding from SECOORA to use previously collected marine acoustic data to develop machine-learning algorithms that identify biological, geophysical, and anthropogenic sounds.

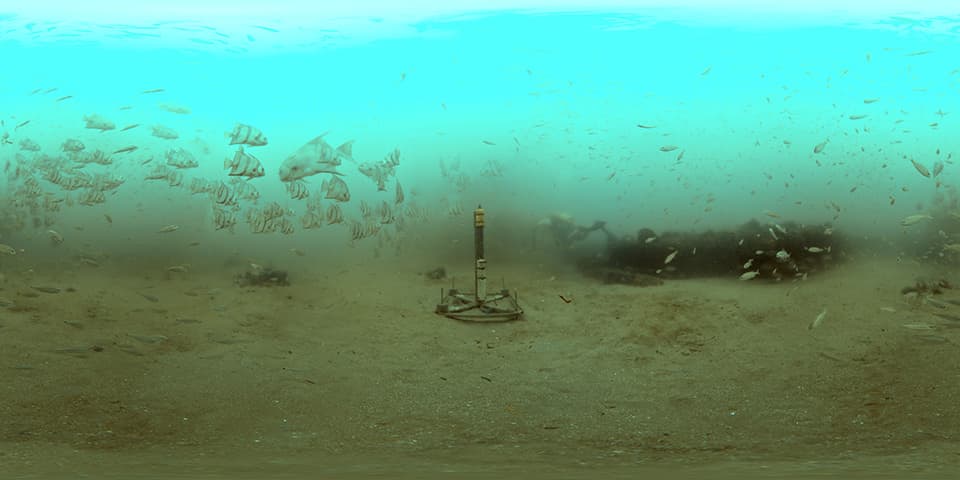

The marine acoustic data being analyzed were recorded with hydrophones in marine protected areas and other marine habitats. A hydrophone is an underwater instrument that detects and records ocean sounds from all directions. Fish, marine mammals, hurricanes, and people (through engines ranging from small boats to transoceanic ships) all make underwater noise. The soundscapes created by this diverse array of sources are rich in information about species presence and behavior, habitat quality and habitat use patterns, anthropogenic activity, and environmental conditions.

Mote Marine Laboratory

“Funding provided for this project will help us develop more efficient methods to scale up and speed up the processing of massively large data sets of sound files to produce practically useful products,” Locascio said. “As part of this project we will also collect additional acoustic data at locations known or suspected to be spawning aggregation sites of important commercial fish species and install acoustic sensors on autonomous platforms used for studies of harmful algal blooms.”

With SECOORA’s support over the next two years, Locascio and his team, which includes staff from Mote and Loggerhead Instruments, will analyze hundreds of thousands of archived acoustic files collected from different habitat types. The team will develop machine-learning algorithms that can be trained to automatically identify species-specific sounds. These algorithms will be able to classify and label sounds from sources such as marine mammals, fish, and invertebrates, as well as anthropogenic noise such as boat engines. Sounds from unknown sources will also be classified as either biological or anthropogenic in origin.
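For readers curious what sorting sounds into "biological" and "anthropogenic" can look like in practice, here is a minimal Python sketch. It is not the team's method, which the article does not describe. It assumes synthetic tones in place of real hydrophone recordings, a hypothetical sample rate and frequency ranges, and a simple nearest-centroid classifier on frequency-band energies as a stand-in for a trained machine-learning model.

```python
import numpy as np

SAMPLE_RATE = 8000  # Hz; hypothetical value chosen for this sketch


def band_energy_features(clip, n_bands=8):
    """Return the fraction of spectral energy in each of n_bands frequency bands."""
    power = np.abs(np.fft.rfft(clip)) ** 2
    energy = np.array([band.sum() for band in np.array_split(power, n_bands)])
    return energy / energy.sum()


def synth_clip(freq_hz, rng, seconds=1.0):
    """Synthetic stand-in for a recording: one tone plus background noise."""
    t = np.arange(int(SAMPLE_RATE * seconds)) / SAMPLE_RATE
    return np.sin(2 * np.pi * freq_hz * t) + 0.3 * rng.standard_normal(t.size)


def train_centroids(clips, labels):
    """Average the feature vectors of each label (nearest-centroid training)."""
    feats = np.array([band_energy_features(c) for c in clips])
    labels = np.array(labels)
    return {lab: feats[labels == lab].mean(axis=0)
            for lab in sorted(set(labels.tolist()))}


def classify(clip, centroids):
    """Assign the label whose centroid is closest to the clip's features."""
    feats = band_energy_features(clip)
    return min(centroids, key=lambda lab: np.linalg.norm(feats - centroids[lab]))


rng = np.random.default_rng(0)

# Assumption: low tones stand in for engine noise, higher tones for animal calls.
train_clips = (
    [synth_clip(rng.uniform(50, 200), rng) for _ in range(20)]
    + [synth_clip(rng.uniform(1500, 2500), rng) for _ in range(20)]
)
train_labels = ["anthropogenic"] * 20 + ["biological"] * 20

centroids = train_centroids(train_clips, train_labels)
print(classify(synth_clip(120, rng), centroids))
print(classify(synth_clip(1800, rng), centroids))
```

Real soundscapes are far messier than these clean tones, which is one reason a project like this one relies on large archives of labeled recordings rather than hand-picked frequency ranges.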

Gliders and autonomous underwater vehicles (AUVs) will also be used to conduct mobile surveys that collect new data. This work intersects with Mote’s Harmful Algal Bloom (HAB) research efforts. Because Locascio’s group and the HAB team plan to collect acoustic data in areas where blooms occur, Locascio’s team will add sensors to the gliders and AUVs to compare the soundscapes of affected and unaffected areas and to track animals carrying acoustic tags.

The advances Locascio and his team make in machine learning for processing acoustic files will be shared with SECOORA and the IOOS Data Management and Communications community.

The team will also make existing data and results accessible and useful to stakeholders, and will work with the South Atlantic Fishery Management Council and the Gulf of Mexico Fishery Management Council to determine how the results can best inform management decisions for the region.

Related news

Scientist Spotlight: Dr. Frank Muller-Karger

Meet Dr. Frank Muller-Karger, a Biological Oceanographer and Distinguished University Professor at the USF College of Marine Science and co-lead of the U.S. Marine Biodiversity Observation Network. His research integrates satellite data, environmental DNA, and physical sensors to better understand how warming oceans are influencing marine populations.

Webinar | The Sound of Resilience? Listening to Estuaries in a Changing World

Join us on November 5, 2026, at 12:00 PM ET to explore sound is transforming our ability to monitor, understand, and communicate estuarine ecosystem health in a rapidly changing world.

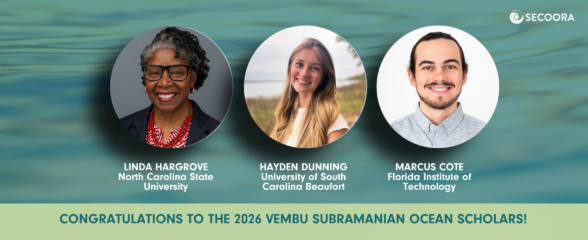

Meet the Recipients for the 2026 Vembu Subramanian Ocean Scholars Award

Meet the 2026 Vembu Subramanian Ocean Scholars! This year’s scholars will attend the 2026 SECOORA Annual Meeting in Atlantic Beach, NC, where they will be paired with experienced mentors who will help support networking, career conversations, and connections within our community.